Most of my cartographic side projects these days follow a theme: mapping elevation data in some way or another. In the past year that has included wading into some “traditional” waters (trying some modern digital hillshading following Daniel Huffman’s processes, and hand-shading following Sarah Bell’s processes, and Eduard Imhof’s in turn), but most projects have been experiments in web-based terrain maps: from simple shaded relief to fuzzy things to flowing things to contour maps. And now, to this next thing.

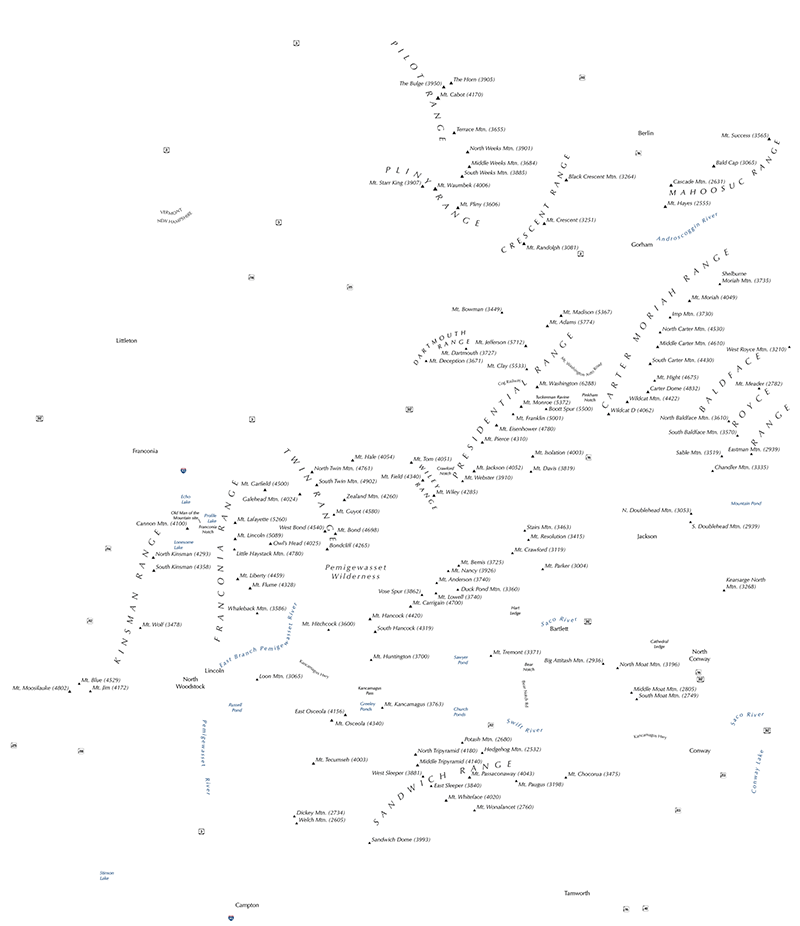

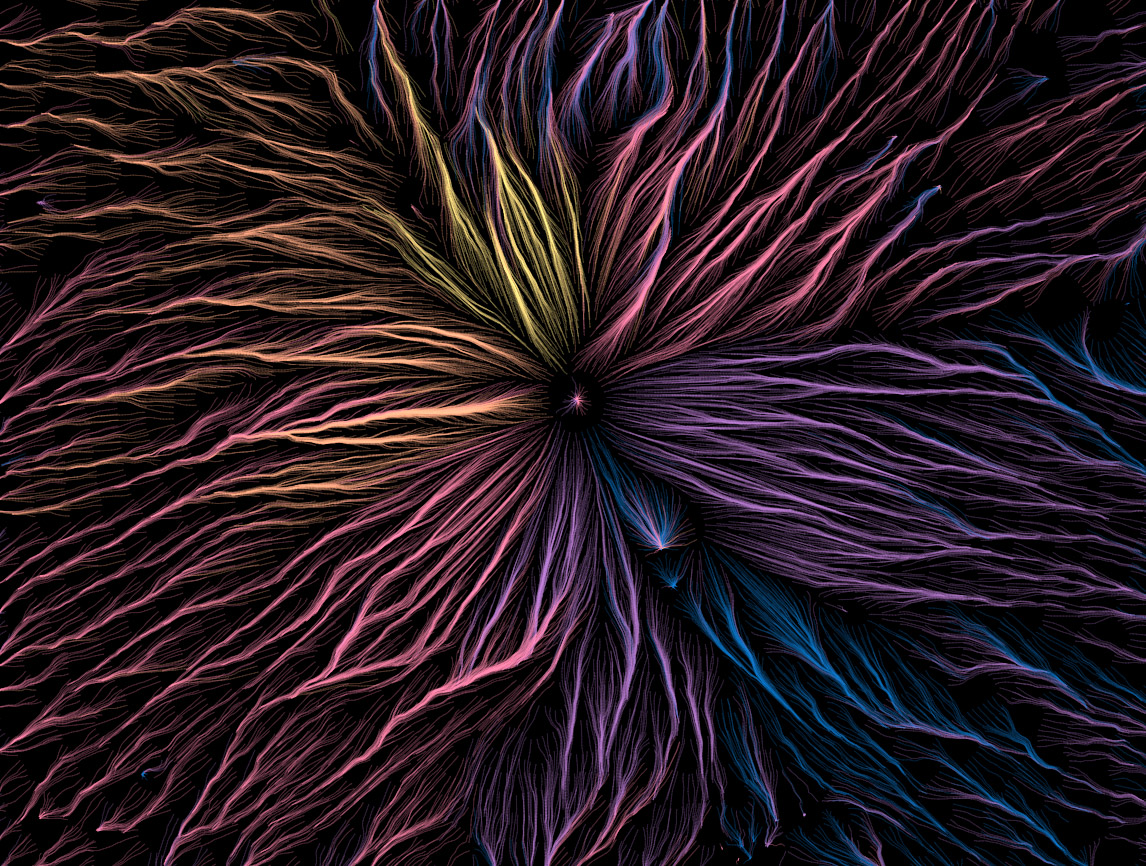

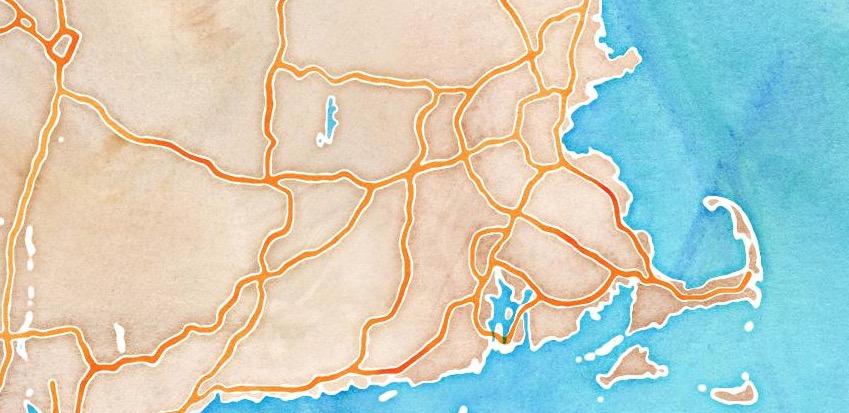

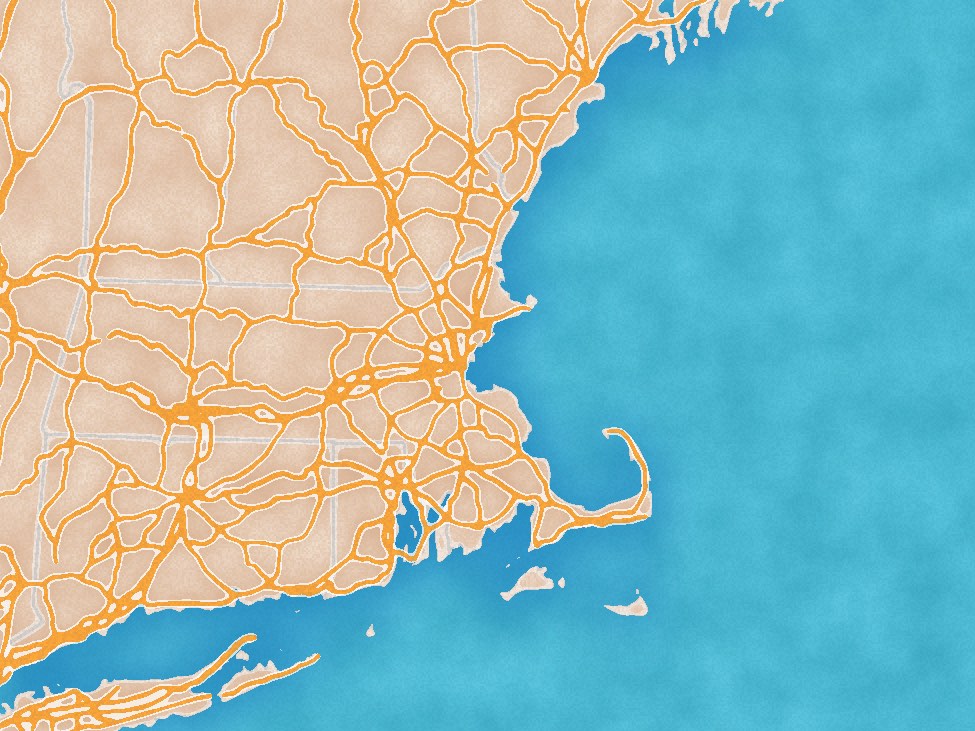

Mount Washington, New Hampshire

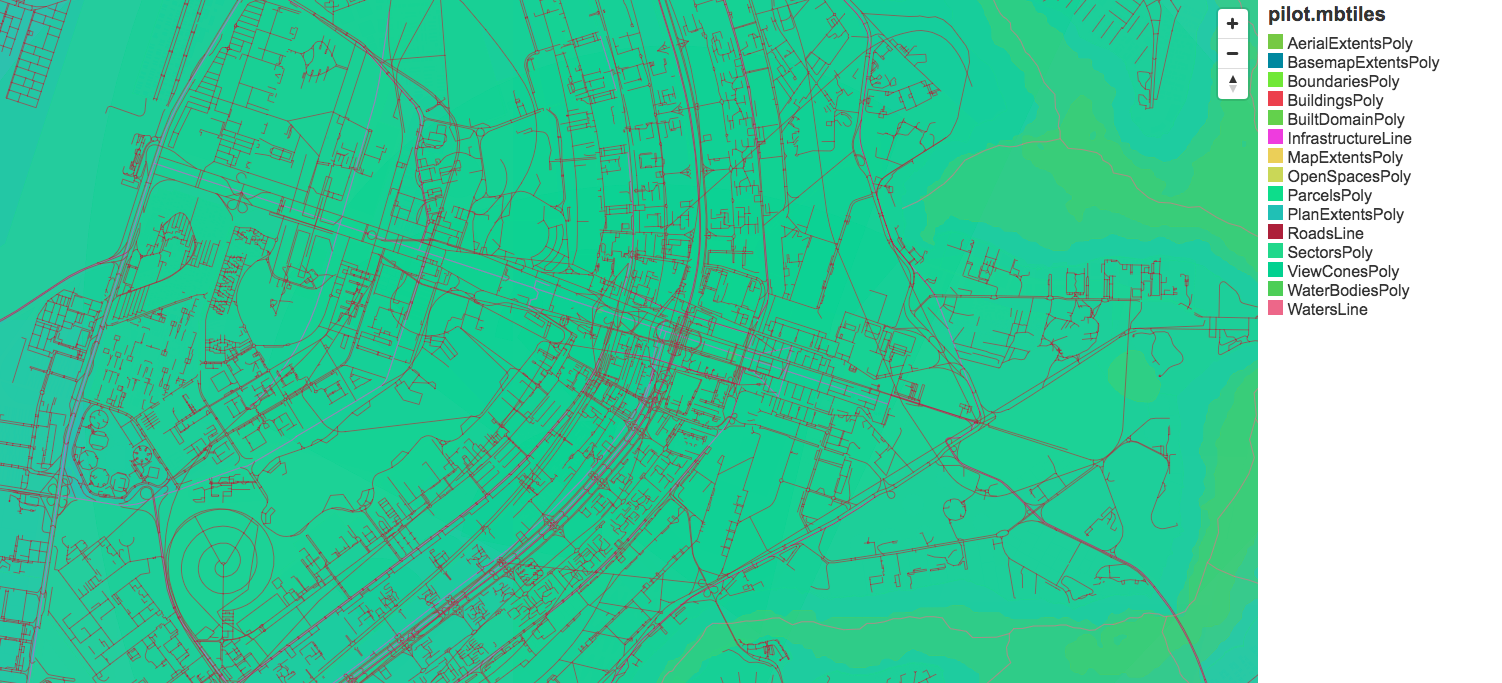

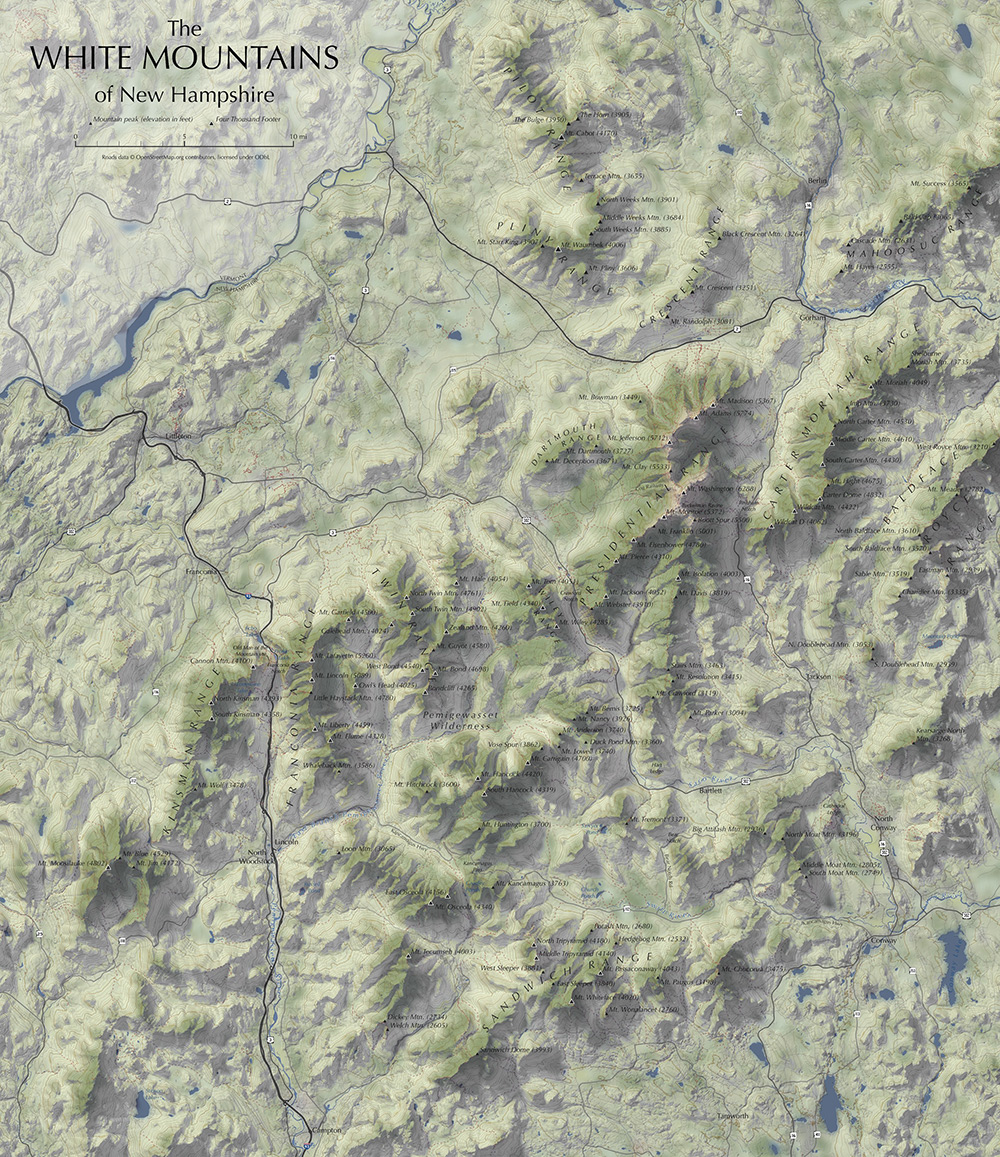

I’d just like to share a few images from what I’ve been playing with off and on for much of 2018, because they’re kind of pretty!

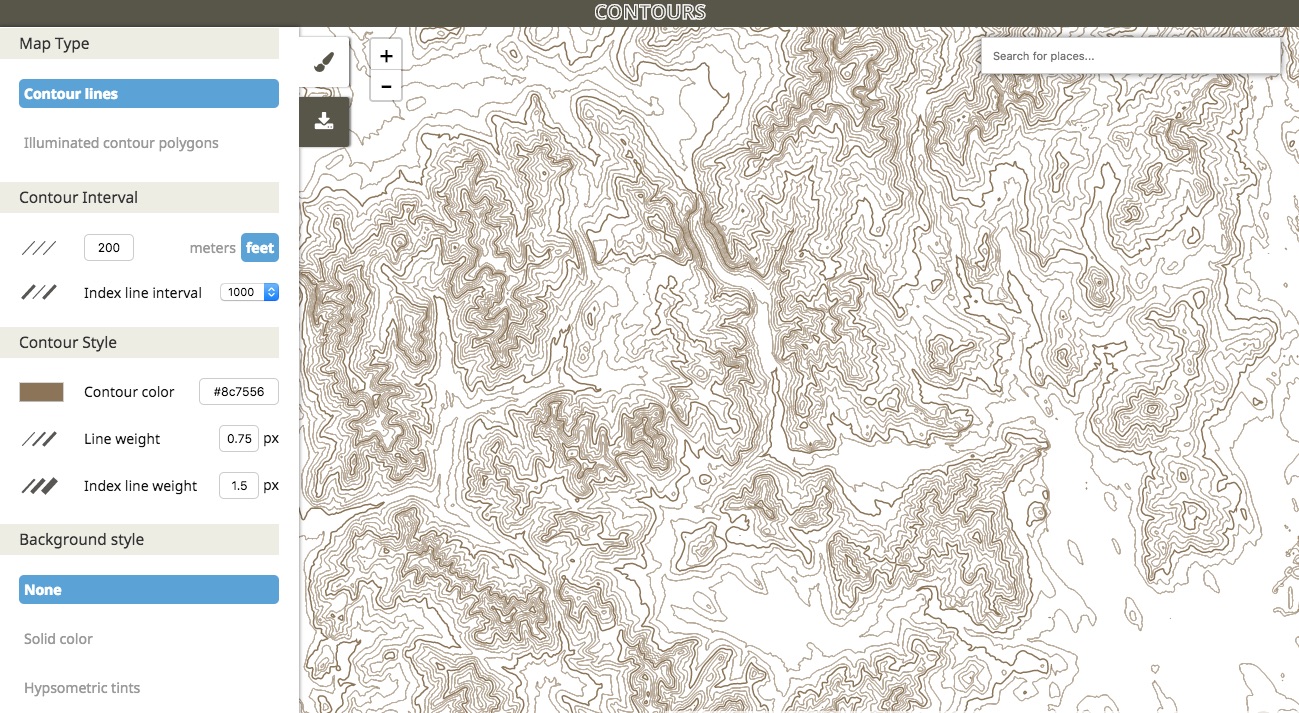

In 2017 Mike Bostock released d3-contour, a d3 module for computing contour polygons from a grid of values, such as raster elevation data. For me this opened a new avenue of fun terrain mapping in a web browser. A quasi-practical product of my efforts is a handy tool for generating and extracting topographic contours for just about anywhere. But it’s the artsy stuff that keeps me coming back.

As you might guess, these are, essentially, a collection of paths that “flow” downhill, something like a static version of the animated flows I did a while back (apologies, that currently has some issues but is still somewhat functional), with some aesthetic differences. Elevation contours are a key part of this one, however.

Attempting hachures… again

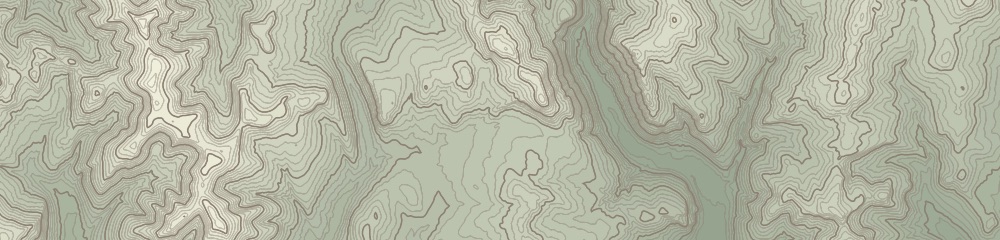

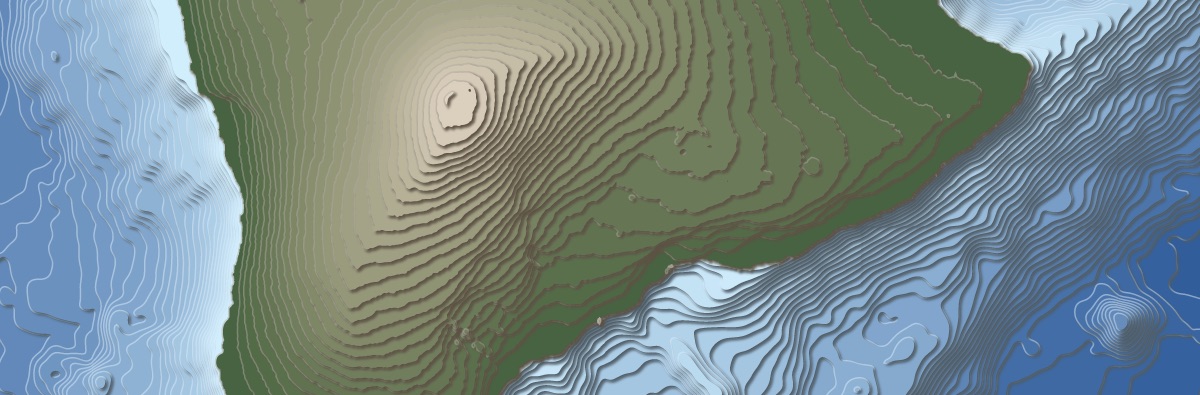

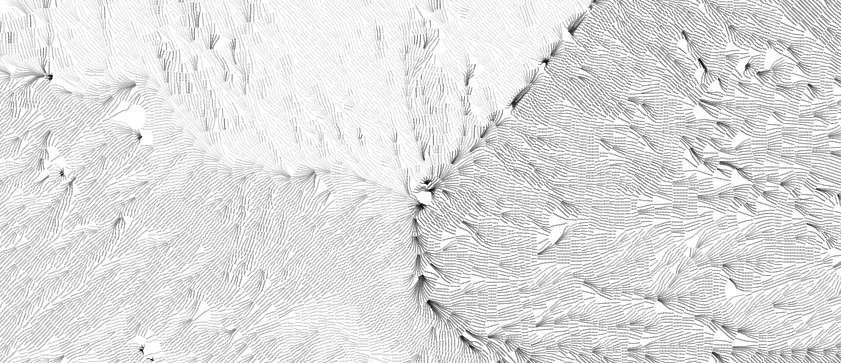

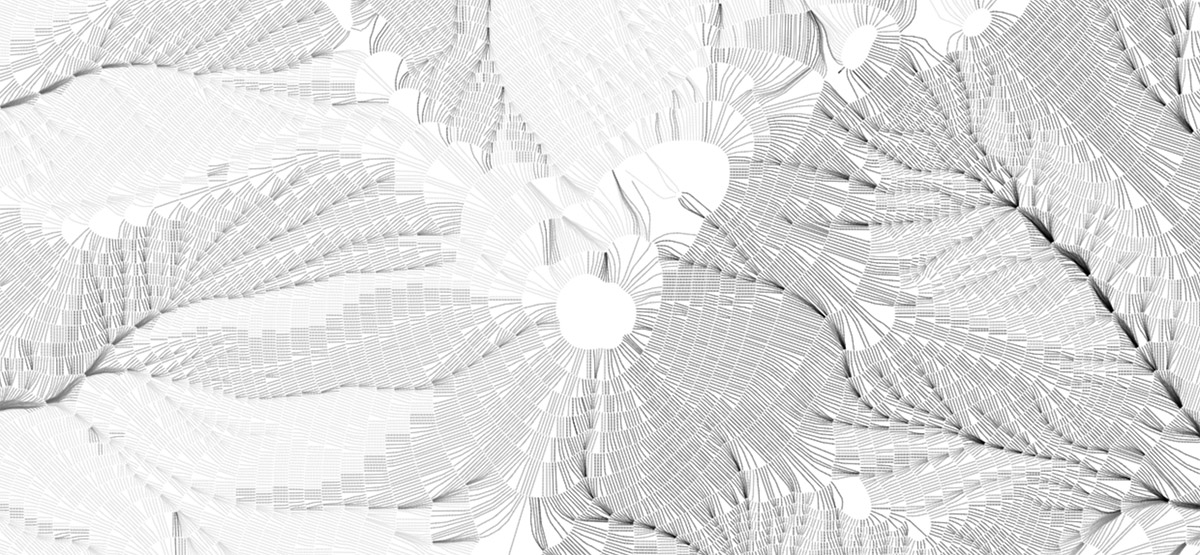

As always, I began with an attempt to draw hachures, a somewhat archaic terrain-shading technique of short, uniformly dense lines running in the direction of slope. Earlier attempts had drawn strokes in a regular grid or from random points, but now thanks to d3-contour I had a proper starting point. Randomly placed strokes running downhill create a hachure-esque look, but real hachures are arranged in rows along regular contour intervals.

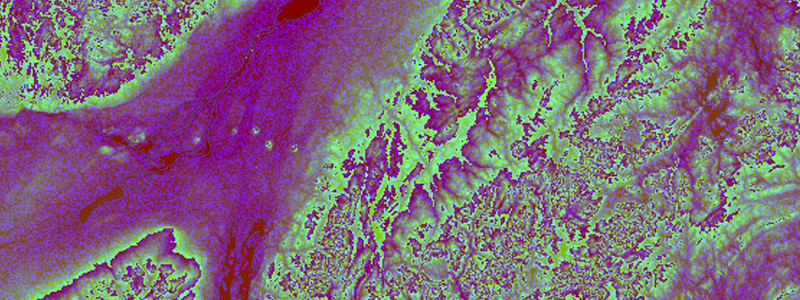

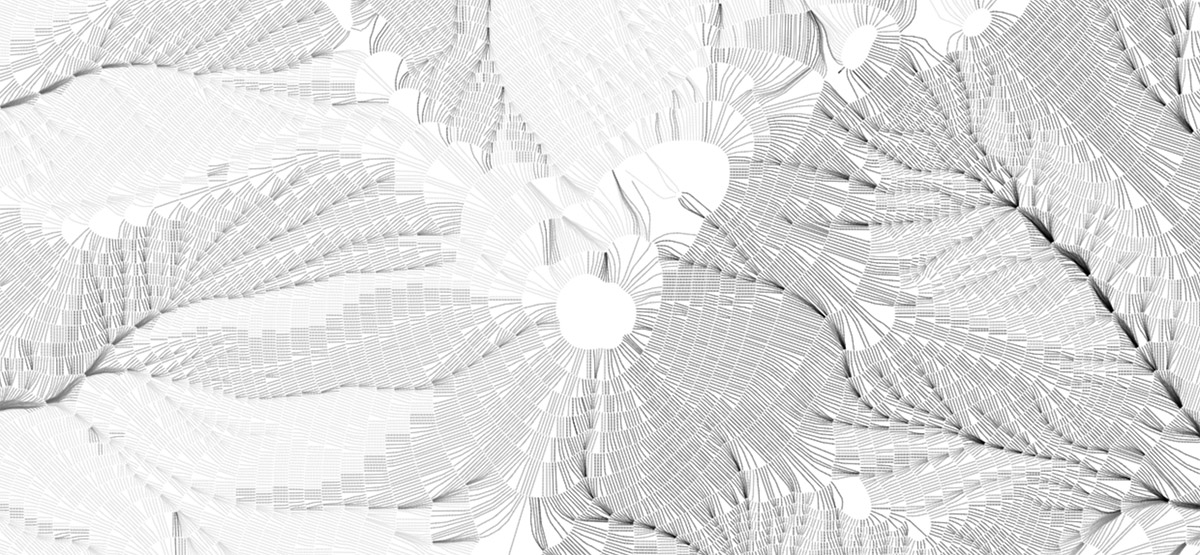

Pseudo-hachures, relatively short strokes drawn from random points. Here, masking around each line helps preserve an appearance of overall uniform density.

Now that I had contours, all I needed to do was draw evenly spaced marks perpendicular to each contour, right? Well, as usual, it kind of works but breaks down easily. For me, at least, perhaps hachuring will always stubbornly be the same hand-drawn technique as when it was born (I’m working on that a bit, too).
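To make the "evenly spaced marks perpendicular to each contour" idea concrete, here is a minimal sketch in plain JavaScript. It is not the code behind these images. The function names `samplePolyline` and `hachureTicks` are hypothetical, a contour is assumed to be a simple array of `[x, y]` points, and which side of the line counts as downhill is assumed to follow from the contour's winding order.

```javascript
// Walk a polyline and return points spaced `spacing` apart along it,
// each with the unit tangent (tx, ty) of the segment it falls on.
function samplePolyline(points, spacing) {
  const samples = [];
  let offset = 0; // distance from the start of the current segment to the next sample
  for (let i = 1; i < points.length; i++) {
    const [x0, y0] = points[i - 1];
    const [x1, y1] = points[i];
    const segLen = Math.hypot(x1 - x0, y1 - y0);
    if (segLen === 0) continue; // skip repeated vertices
    const tx = (x1 - x0) / segLen;
    const ty = (y1 - y0) / segLen;
    let d = offset;
    while (d <= segLen) {
      samples.push({ x: x0 + tx * d, y: y0 + ty * d, tx, ty });
      d += spacing;
    }
    offset = d - segLen; // carry the remainder into the next segment
  }
  return samples;
}

// Build one short tick per sample, perpendicular to the contour.
// Rotating the tangent by 90 degrees gives the normal; the choice of side
// is an assumption and would need flipping for the opposite winding order.
function hachureTicks(contour, spacing, length) {
  return samplePolyline(contour, spacing).map(({ x, y, tx, ty }) => {
    const nx = -ty;
    const ny = tx;
    return [[x, y], [x + nx * length, y + ny * length]];
  });
}
```

Ticks built this way have a fixed length and a fixed starting spacing, so nothing stops them from crowding together or spreading apart as the contours curve.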

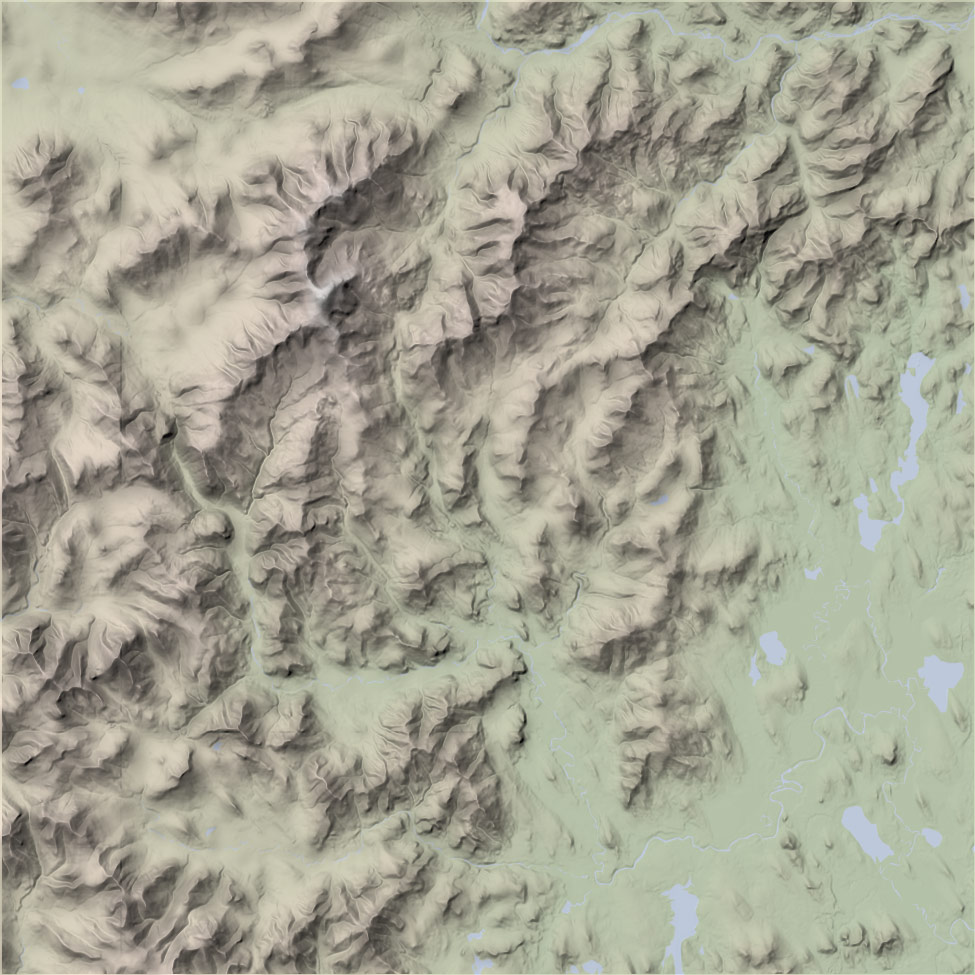

Mount Washington in an attempt at more proper, orderly hachures with some shading. It’s not terrible, but I haven’t figured out how to thin out lines that bunch up nor fill in lines that fan out.

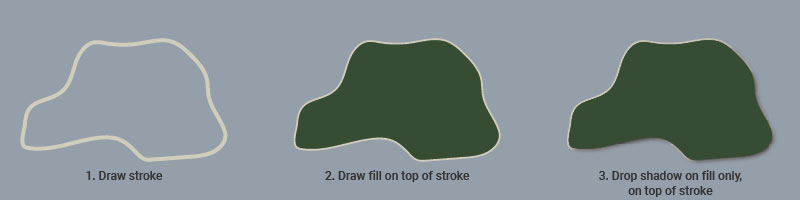

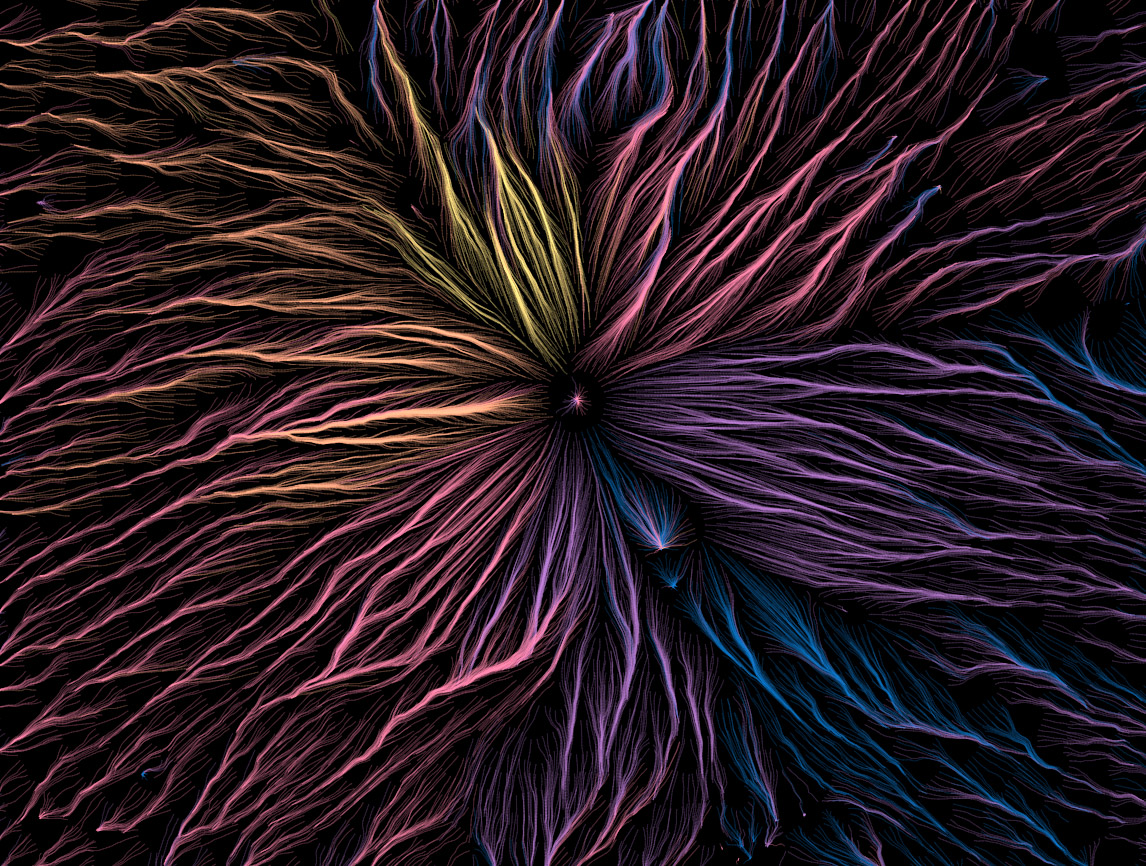

After abandoning the idea of neat, short hachure marks, it’s a short leap to what I’ve ended up with: just keep drawing the paths farther downhill and apply various colors and blending modes. There’s still a hint of order behind it all, though, as the lines still start at regular intervals along contour lines, making a smoother and more pleasing appearance than random placement would.

Same area as above (at a slightly different scale), allowing the lines to continue on downhill.

There are a lot of variables to play with: the contour interval, the spacing along contours, the length of paths, and the color scheme, to name a few. What works best to my eye depends on the scale and particular geography of the map, though as a general rule it’s best in mountainous but not overly rough terrain. If it’s too flat, lines don’t really know where to go, and if it’s too jumbled, my methods aren’t good enough to keep them “flowing” around all the obstacles. Either way it looks messy. As for colors, I enjoy a dark background and somewhat vibrant colors based on the direction of flow, but your mileage may vary!
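As a rough illustration of coloring by direction of flow, the sketch below averages a path's segment directions and maps the result to a hue. The circular mean and the HSL mapping are assumptions made for this example, not necessarily how the images here were colored, and `meanDirection` and `directionToColor` are hypothetical names.

```javascript
// Circular mean of angles given in radians. A plain arithmetic mean breaks
// at the wrap-around: averaging 350 and 10 degrees would give 180, while
// summing unit vectors and taking atan2 gives a direction near 0.
function meanDirection(angles) {
  let sx = 0;
  let sy = 0;
  for (const a of angles) {
    sx += Math.cos(a);
    sy += Math.sin(a);
  }
  return Math.atan2(sy, sx);
}

// Map a direction in radians to a CSS hsl() string, using the angle in
// degrees (normalized to 0..360) as the hue. The default saturation and
// lightness are arbitrary picks that stay readable on a dark background.
function directionToColor(angle, saturation = 80, lightness = 60) {
  const degrees = (angle * 180) / Math.PI;
  const hue = ((degrees % 360) + 360) % 360;
  return `hsl(${Math.round(hue)}, ${saturation}%, ${lightness}%)`;
}
```

The returned string can be used directly as a canvas `strokeStyle`.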

Once more, with fun colors.

Mount Fuji, if I recall correctly

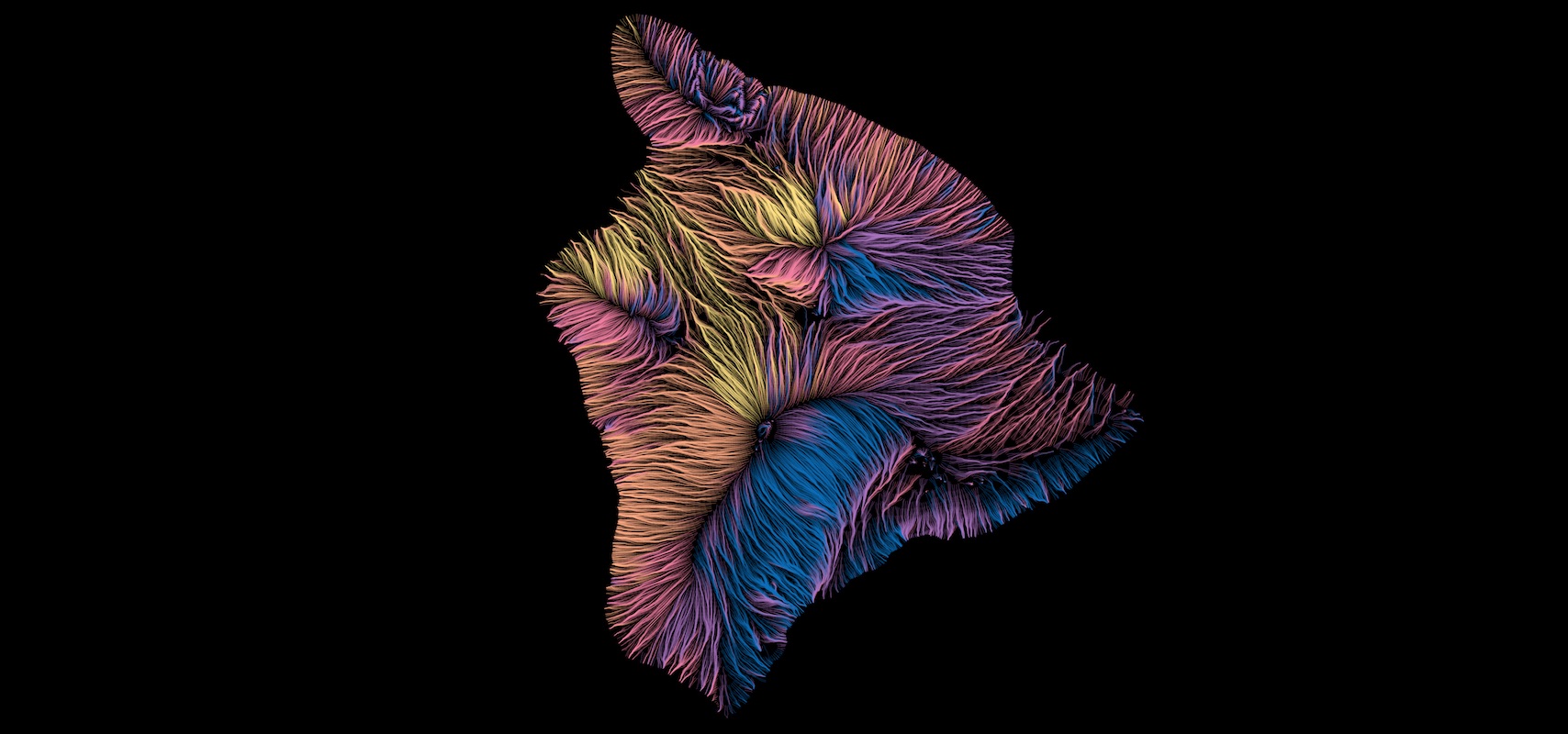

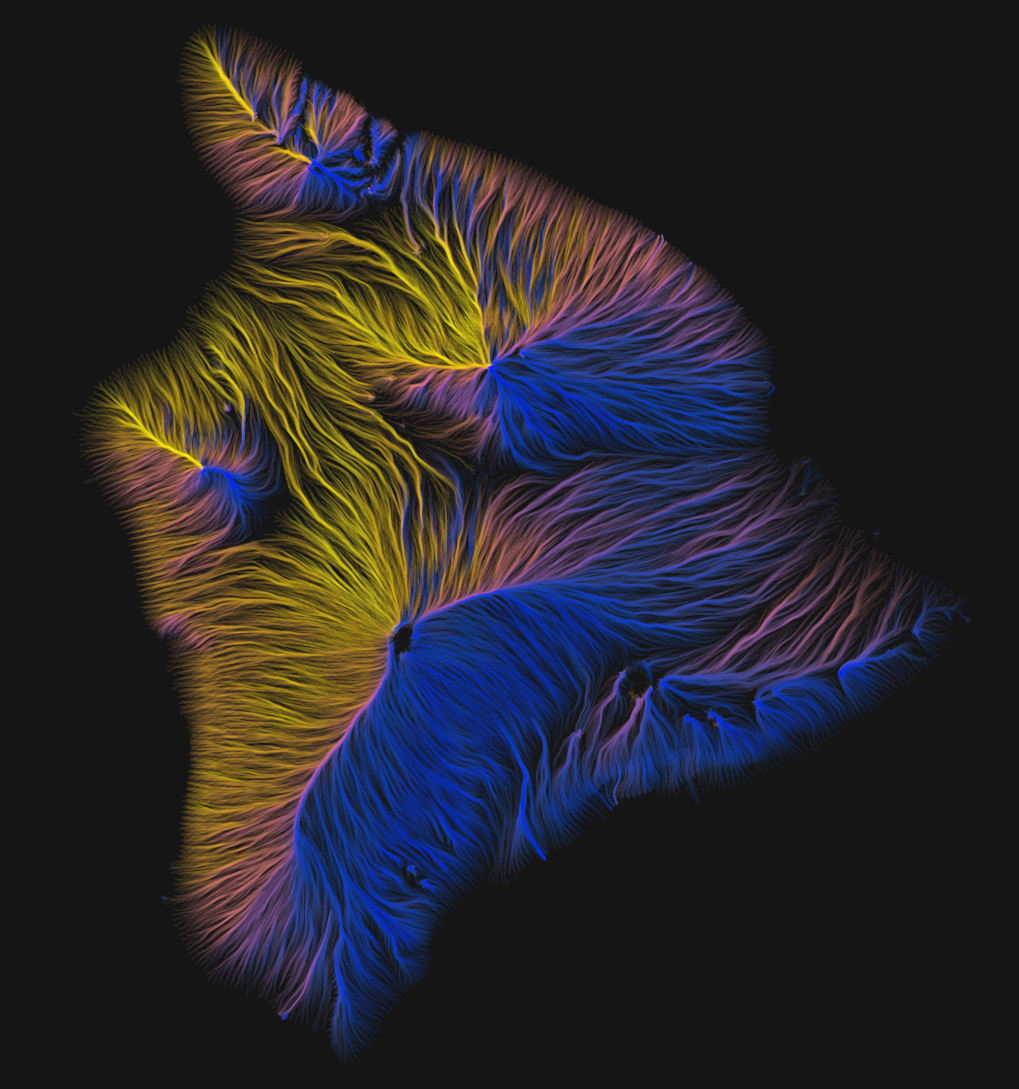

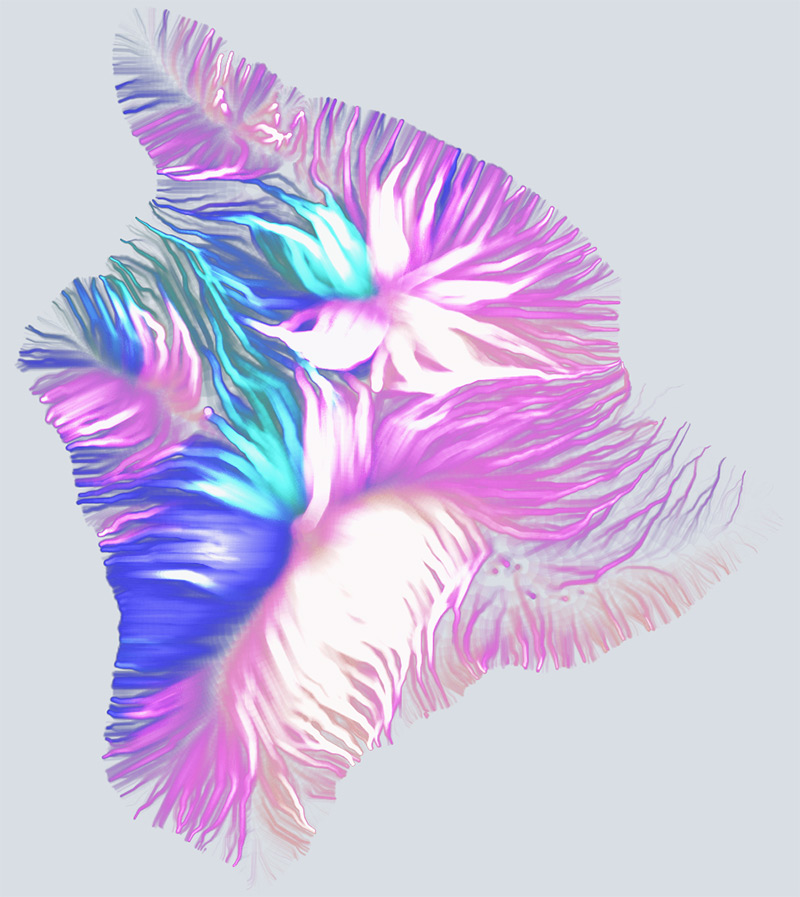

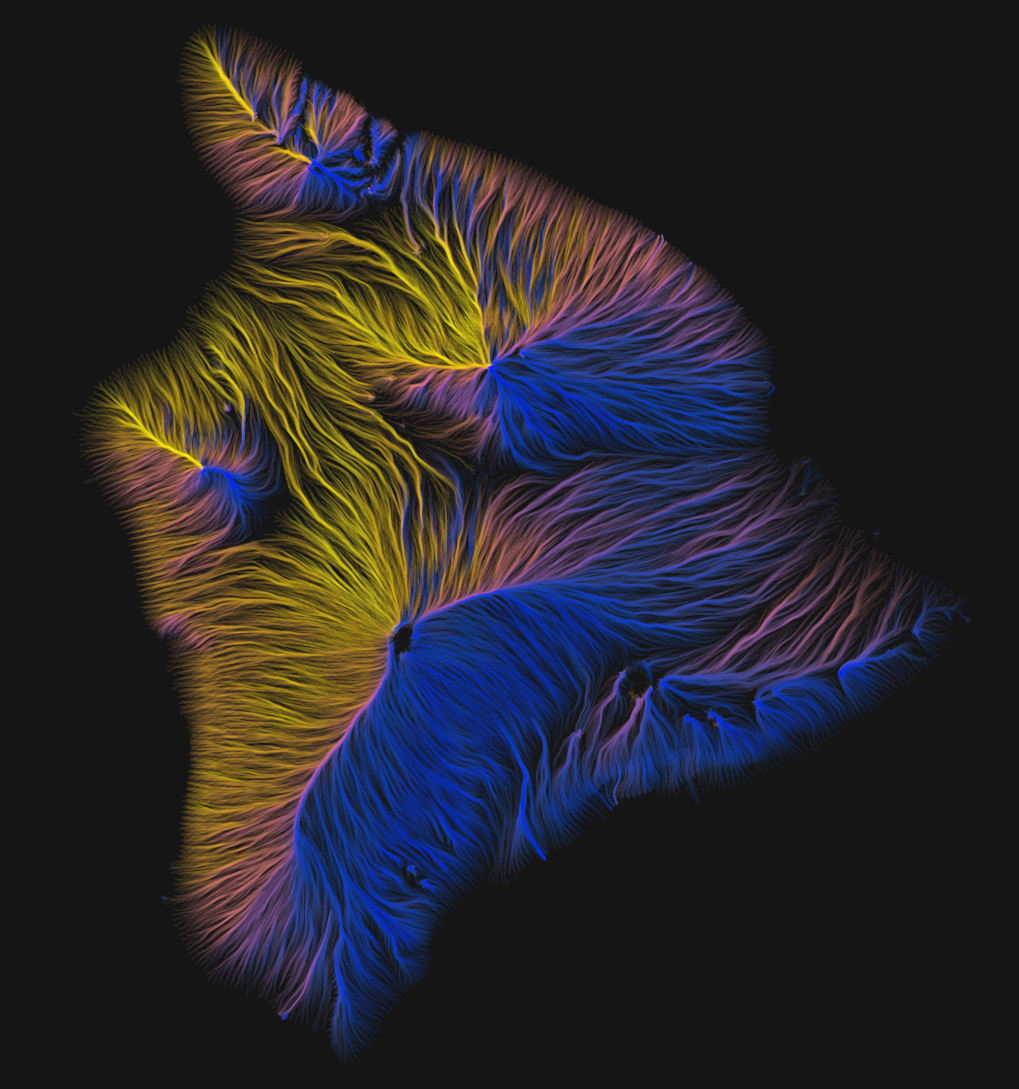

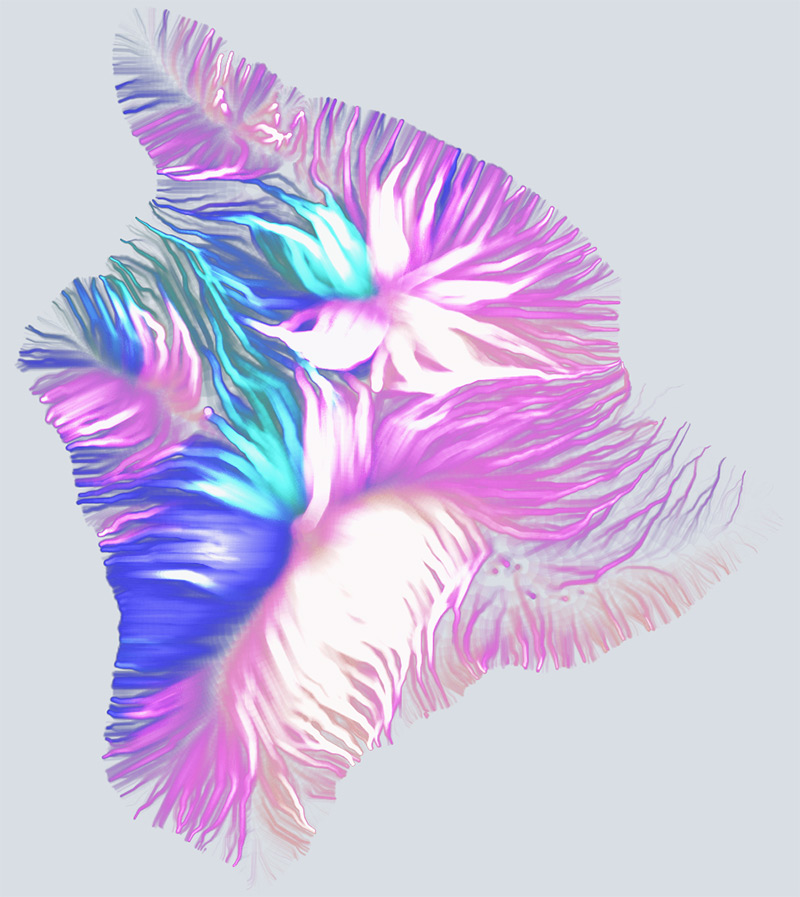

Hawaii

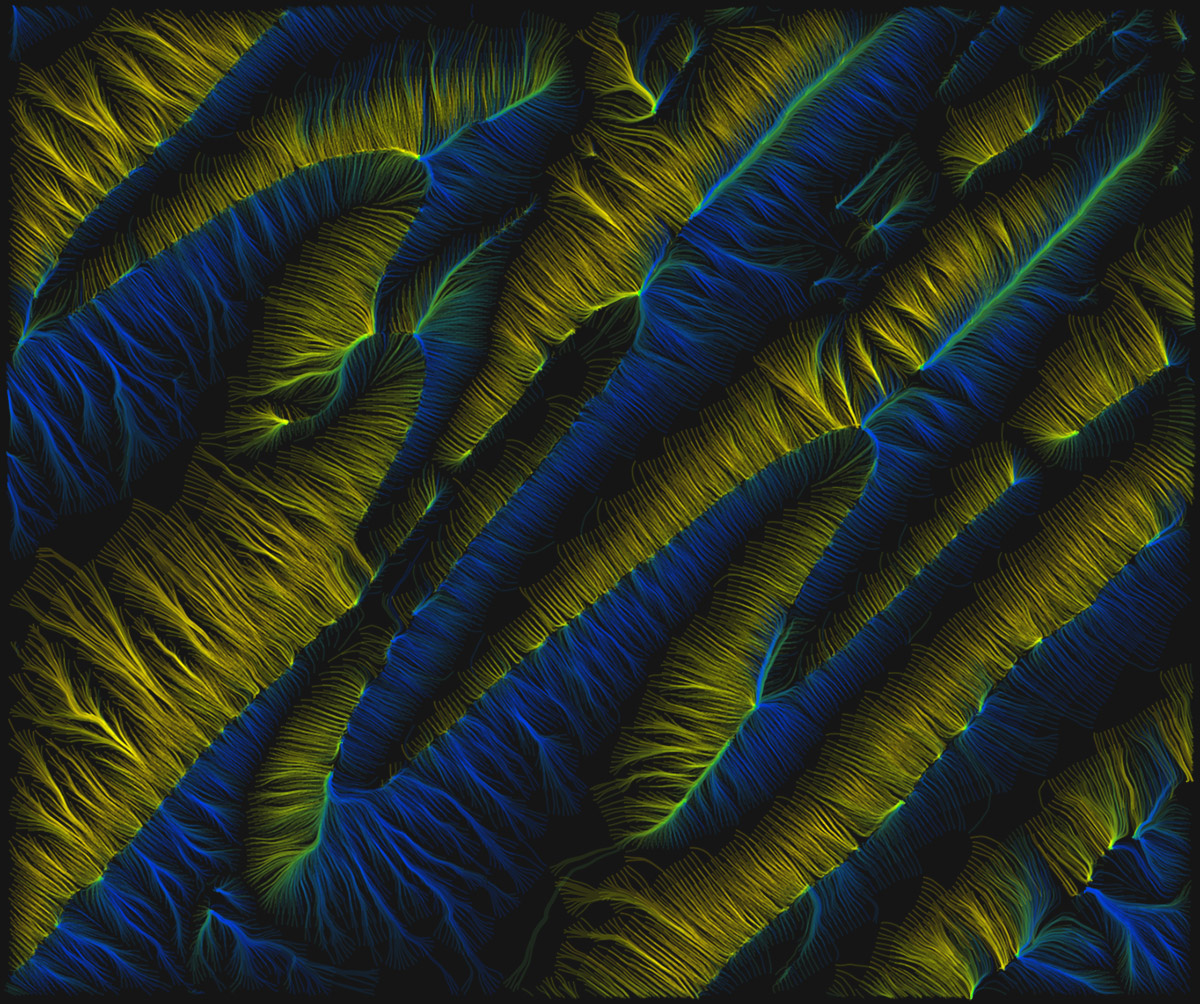

More in-your-face line widths and blending modes.

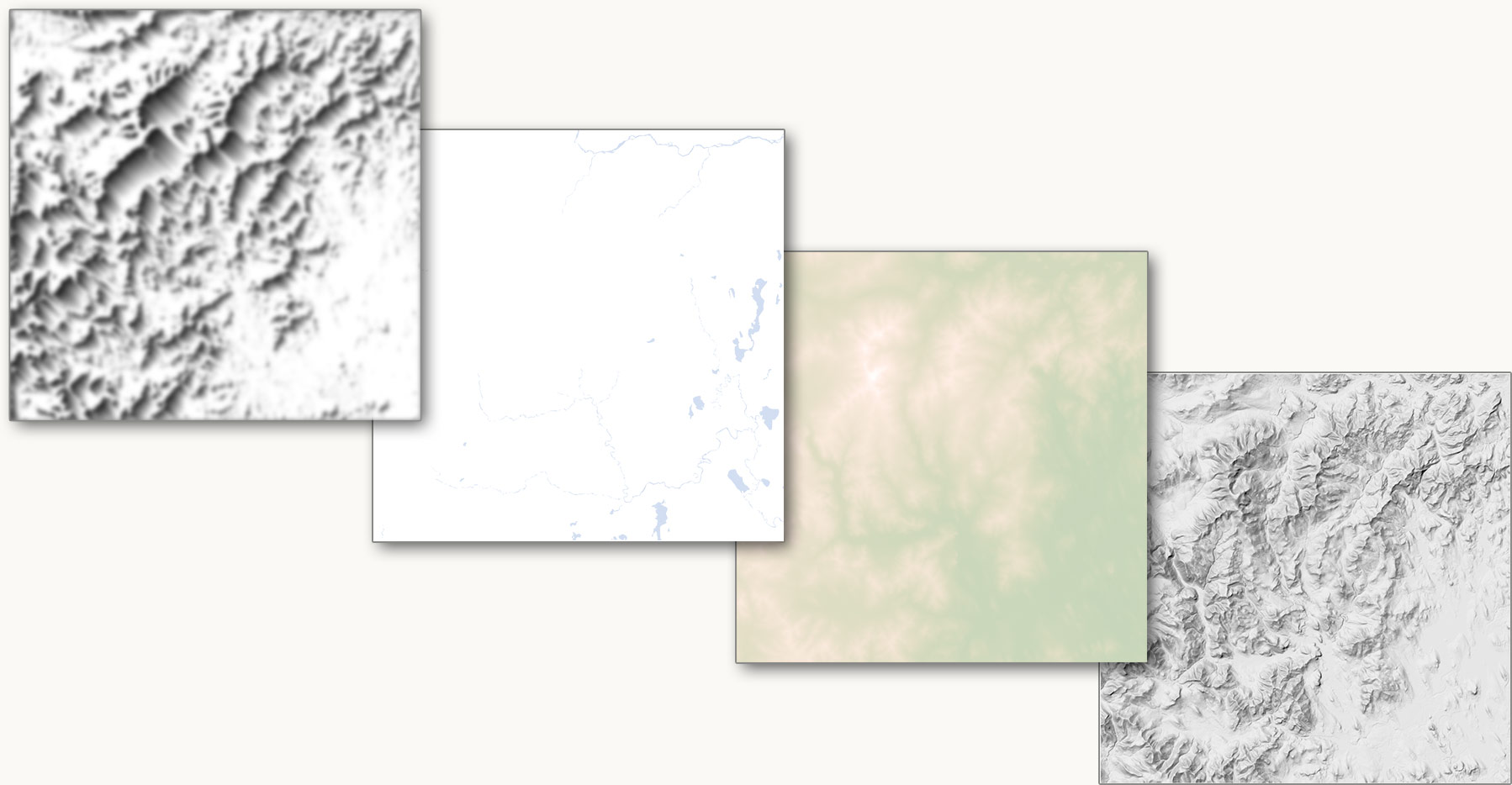

Inversion

As fun and occasionally beautiful as these images turn out to be, what kept bothering me is that most of the time my brain just couldn’t perceive a correct picture of elevation. The flows go downhill and converge into large streams, which makes sense conceptually and looks cool, but no matter what I did with colors or anything else, I could only see an inverted picture of the land. The prominent blank spaces (peaks and ridges) always looked like valleys.

It only dawned on me last week that a solution, if one was needed, is simply to draw lines the other way, going uphill from each contour. They start with even spacing at low elevations and begin to converge on ridgelines and summits. Finally I could see the structure of the terrain!

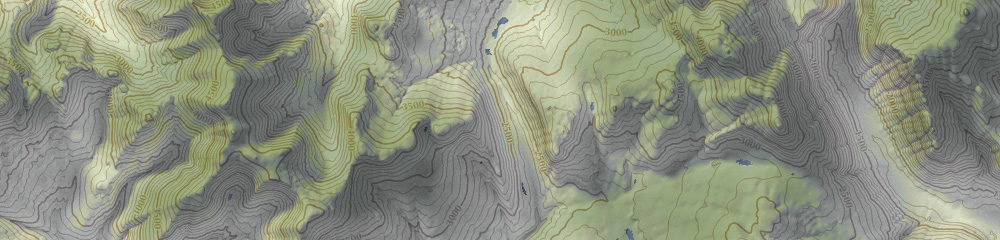

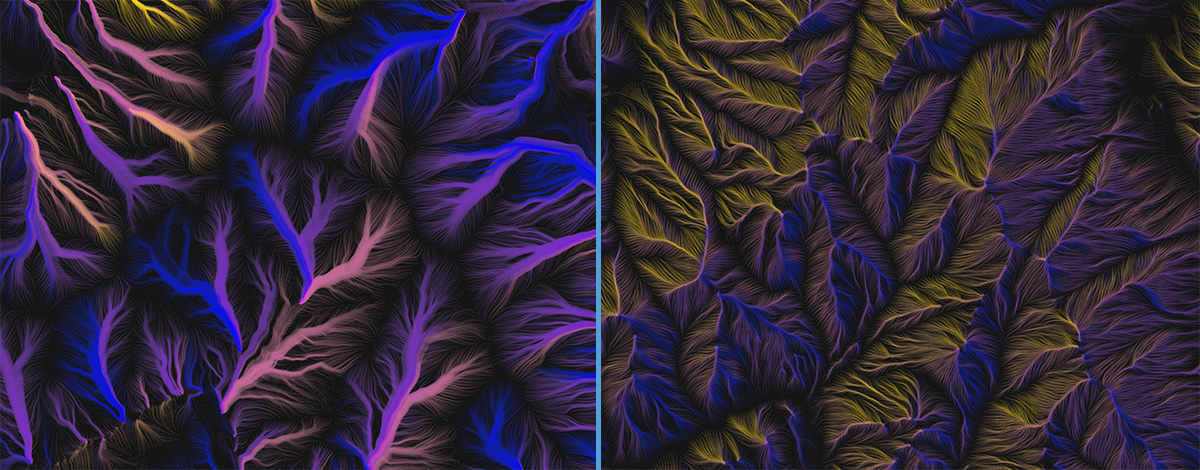

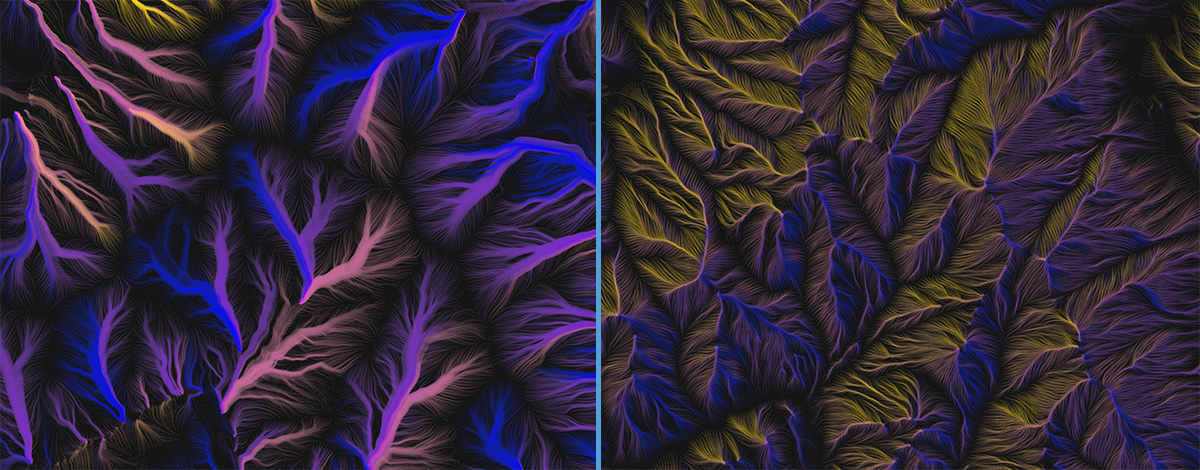

The same map extent drawn with lines running downhill (left) and uphill (right).

Around White Mountain Peak, California

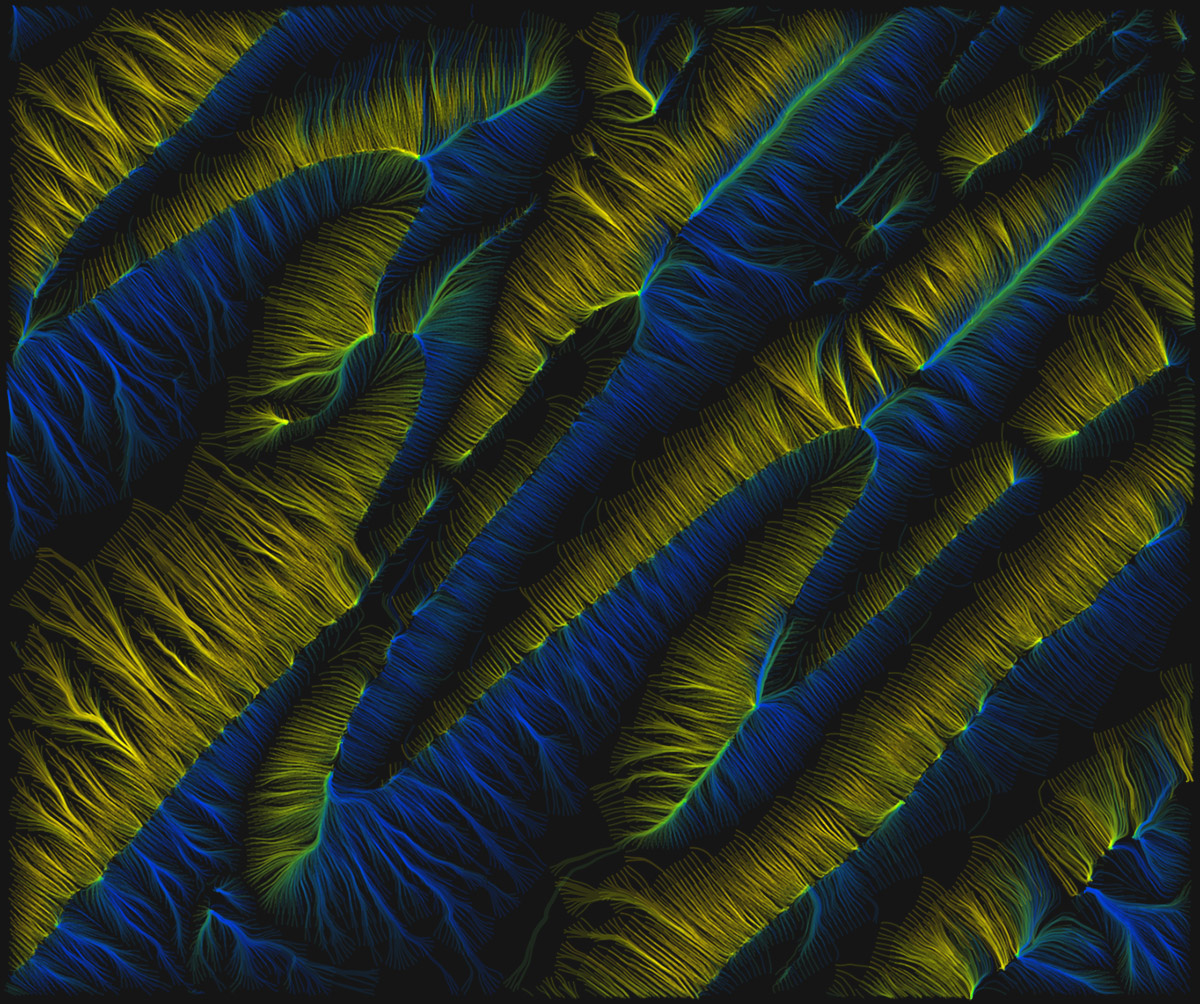

Central Pennsylvania

Technically speaking

This is still pretty experimental, and I’m not yet at the point of publishing anything, but eventually I’d like to share source code and have a thing like the contours tool or animated flow viewer where you can render a map for wherever you please. Right now it’s far too slow, and the code is borderline unreadable.

But for those interested, the gist of how this works is this:

- Get elevation data from terrain tiles and compute contours (as described in this post about the contours tool)

- Draw contours invisibly to SVG and use the getPointAtLength method to find regularly spaced points along each contour line.

- From each of those points, start calculating a path up or downhill.

  - Get the elevation and aspect at the point.

  - Proceed to the next point in the direction of aspect (or opposite, for uphill) at some specified segment distance (usually 5 pixels or so).

  - Repeat for the next point, and so on until an ending condition* is met.

- Calculate a mean aspect value for all the coordinates in the path. I use this for coloring each line according to the general direction in which it flows.

- Draw all paths to a canvas by feeding their coordinates to a d3 line generator with some curve interpolation applied.
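The path-tracing step in the list above can be sketched roughly as follows. This is a simplified stand-in rather than the actual code: it assumes the elevation data is an array of rows (`grid[row][col]`, at least 3 by 3, one cell per pixel), estimates the slope direction with central differences at the nearest cell, and stops only at a segment limit, a flat spot, or the grid edge. `gradientAt` and `tracePath` are hypothetical names.

```javascript
// Estimate the elevation gradient [dz/dx, dz/dy] at (x, y) using central
// differences around the nearest grid cell, clamped away from the border.
function gradientAt(grid, x, y) {
  const rows = grid.length;
  const cols = grid[0].length;
  const c = Math.min(cols - 2, Math.max(1, Math.round(x)));
  const r = Math.min(rows - 2, Math.max(1, Math.round(y)));
  return [
    (grid[r][c + 1] - grid[r][c - 1]) / 2,
    (grid[r + 1][c] - grid[r - 1][c]) / 2,
  ];
}

// Trace a path from `start`, moving `step` cells per segment along the
// steepest slope: against the gradient for downhill, with it for uphill.
function tracePath(grid, start, { step = 5, maxSegments = 50, uphill = false } = {}) {
  const rows = grid.length;
  const cols = grid[0].length;
  const sign = uphill ? 1 : -1;
  const path = [start];
  let [x, y] = start;
  for (let i = 0; i < maxSegments; i++) {
    const [gx, gy] = gradientAt(grid, x, y);
    const mag = Math.hypot(gx, gy);
    if (mag === 0) break; // flat ground: no direction to follow
    x += sign * (gx / mag) * step;
    y += sign * (gy / mag) * step;
    if (x < 0 || y < 0 || x > cols - 1 || y > rows - 1) break; // left the grid
    path.push([x, y]);
  }
  return path;
}
```

On a surface that simply rises toward the east, a downhill path from the middle of the grid heads straight west, and setting `uphill: true` sends it east instead.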

* Paths could keep going until they reach the highest/lowest point or the edge of the screen, but I’ve found it best to limit them by imposing one or more conditions for ending:

- A maximum number of segments

- A maximum elevation change (e.g., a path can only climb/descend three contour intervals)

- A minimum distance traversed. Some paths otherwise get “stuck” and bounce back and forth in a confined space, resulting in distracting bright spots on the map.
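One way the three ending conditions might be combined into a single check is sketched below. The details are assumptions: the option names are hypothetical, `elevations` is taken to be a list of elevations parallel to the path's points, and "minimum distance traversed" is interpreted here as the straight-line distance covered over the last `lookback` segments.

```javascript
// Return a reason string if the path should end, or null to keep going.
// `path` is an array of [x, y] points; `elevations` holds one value per point.
function shouldStop(path, elevations, {
  maxSegments = 50,
  maxElevationChange = Infinity,
  minDistance = 0,
  lookback = 10,
} = {}) {
  const n = path.length;

  // 1. Too many segments.
  if (n - 1 >= maxSegments) return "max-segments";

  // 2. Climbed or descended too far from the starting elevation.
  if (Math.abs(elevations[n - 1] - elevations[0]) >= maxElevationChange) {
    return "max-elevation-change";
  }

  // 3. Barely moved over the last `lookback` segments, which suggests the
  //    path is bouncing back and forth in a confined space.
  if (n > lookback) {
    const [x0, y0] = path[n - 1 - lookback];
    const [x1, y1] = path[n - 1];
    if (Math.hypot(x1 - x0, y1 - y0) < minDistance) return "stuck";
  }

  return null;
}
```

Calling this after each new point is added, with `maxElevationChange` set to a multiple of the contour interval, would give the "only climb or descend three contour intervals" behavior described above.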

After all that, it comes down to playing around with colors, blending, and some of the variables, as mentioned earlier. Should the lines be sparse or dense? Long or short? Thick or thin? Many of the images here represent my favorite settings, but it’s hard to stop trying different combinations—which is why the code is always “in progress” and messy!
